LLM is CPU. Context window is RAM. Andrej Karpathy captured something real with that analogy: the model processes, but the context is where information lives. What the model can reason about is bounded by what you put in that window, and how you structure it.

In 2026, Gartner formally defined context engineering as a core enterprise discipline, and the market responded with a new role: the Context Engineer. And despite what some headlines suggest, this is not about prompt engineering dying. It is about the scope of the problem expanding beyond what prompting alone can address.

What Context Engineering Actually Is

A prompt engineer optimizes interactions. One prompt, one response. The goal is to craft the question in a way that extracts maximum value from the model.

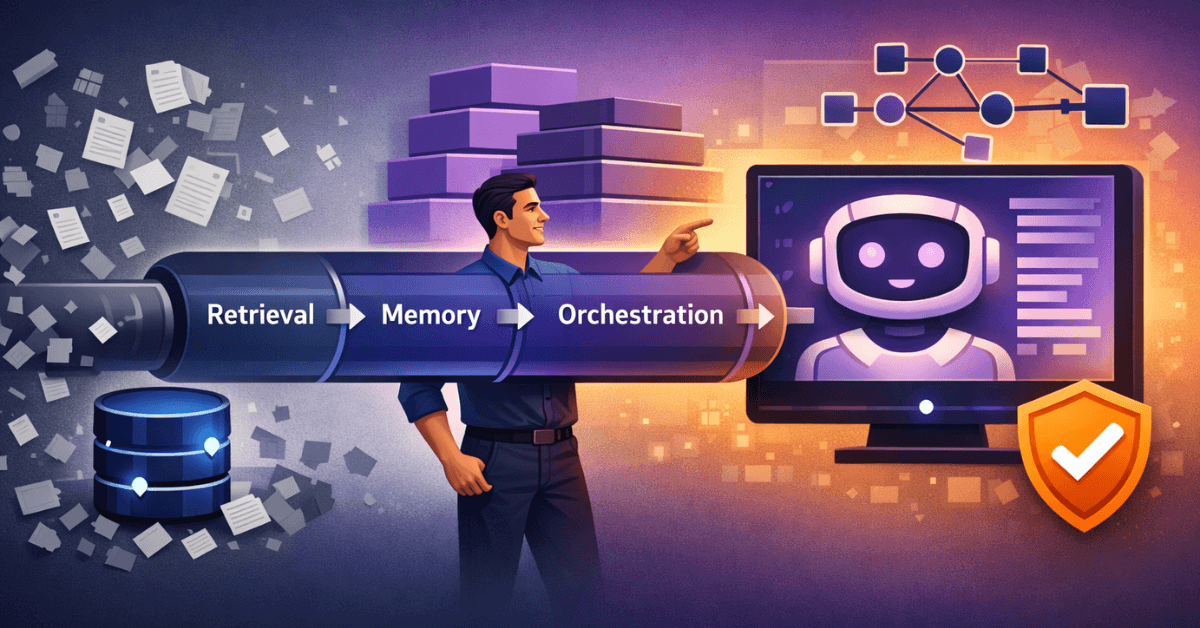

A context engineer designs the entire information environment. Memory systems, retrieval pipelines, guardrails, and the orchestration between all of them. The goal is to ensure that when the model needs information, it is available, accurate, and structured correctly.

The distinction matters because the problems are fundamentally different. Prompt engineering is a communication problem: how do you ask the right question? Context engineering is a systems problem: how do you build the infrastructure that makes the model useful at scale?

Consider a production RAG (Retrieval-Augmented Generation) system. A prompt engineer might write an excellent system prompt and a well-structured query template. But the quality of the final output depends heavily on things that happen before the model ever sees the prompt: which chunks of text were retrieved, how they were ranked, whether they contain conflicting information, how much of the context window they consume. These are context engineering decisions.

The market is already treating them as different skills, and the job descriptions are diverging accordingly.

The Architecture of Context

Context engineering operates across three distinct layers, and understanding each one is necessary to build reliable AI systems.

The retrieval layer is responsible for finding relevant information from external sources. This is where vector databases live, where semantic search happens, and where most of the performance tuning occurs. The basic pattern is embedding-based similarity search: documents are chunked, embedded into a high-dimensional vector space, and stored. At query time, the user input is also embedded, and the system retrieves the nearest neighbors by cosine similarity or dot product.
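As a rough illustration of the query-time step, the sketch below does brute-force cosine similarity over a handful of hand-written vectors. The class name `CosineSearch`, the document ids, and the three-dimensional "embeddings" are all made up for the example; a real system would get vectors from an embedding model and delegate the search to a vector database index.

```java
import java.util.ArrayList;
import java.util.Comparator;
import java.util.LinkedHashMap;
import java.util.List;
import java.util.Map;

// Brute-force nearest-neighbor search over toy embeddings (illustrative only).
final class CosineSearch {

    // Cosine similarity: dot(a, b) / (|a| * |b|). Assumes equal-length, non-zero vectors.
    static double cosine(double[] a, double[] b) {
        double dot = 0, normA = 0, normB = 0;
        for (int i = 0; i < a.length; i++) {
            dot += a[i] * b[i];
            normA += a[i] * a[i];
            normB += b[i] * b[i];
        }
        return dot / (Math.sqrt(normA) * Math.sqrt(normB));
    }

    // Returns the ids of the k stored vectors most similar to the query, best first.
    static List<String> topK(Map<String, double[]> store, double[] query, int k) {
        List<String> ids = new ArrayList<>(store.keySet());
        ids.sort(Comparator.comparingDouble((String id) -> -cosine(store.get(id), query)));
        return ids.subList(0, Math.min(k, ids.size()));
    }

    public static void main(String[] args) {
        Map<String, double[]> store = new LinkedHashMap<>();
        store.put("refund-policy", new double[] {0.9, 0.1, 0.0});
        store.put("shipping-times", new double[] {0.1, 0.9, 0.1});
        store.put("returns-howto", new double[] {0.8, 0.3, 0.1});
        double[] query = {1.0, 0.2, 0.0}; // stands in for an embedded user question
        System.out.println(topK(store, query, 2)); // prints [refund-policy, returns-howto]
    }
}
```

Scanning every vector like this is linear in the size of the corpus, which is why production stores rely on approximate indexes instead.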

This sounds simple. In practice, chunking strategy alone has a significant impact on retrieval quality. Fixed-size chunks are easy to implement but can cut sentences and ideas in half, losing semantic coherence. Semantic chunking splits at sentence or topic boundaries, which preserves coherence but creates variable-length chunks that complicate batch processing. Hierarchical chunking indexes both document summaries and granular chunks, enabling multi-granularity retrieval. Each approach has trade-offs that depend on the domain and the query patterns.
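To make the first two strategies concrete, here is a minimal sketch using only the JDK. It measures size in characters rather than tokens, and it uses `java.text.BreakIterator` as a simple stand-in for sentence-aware splitting; the class name `Chunkers` and the sample text are invented for the example, and real semantic chunkers usually look at meaning, not just punctuation.

```java
import java.text.BreakIterator;
import java.util.ArrayList;
import java.util.List;
import java.util.Locale;

final class Chunkers {

    // Fixed-size chunking: cut every `size` characters, regardless of meaning.
    static List<String> fixedSize(String text, int size) {
        List<String> chunks = new ArrayList<>();
        for (int i = 0; i < text.length(); i += size) {
            chunks.add(text.substring(i, Math.min(text.length(), i + size)));
        }
        return chunks;
    }

    // Sentence-aware chunking: pack whole sentences until the next one would exceed maxChars.
    // A single sentence longer than maxChars still becomes its own (oversized) chunk.
    static List<String> bySentence(String text, int maxChars) {
        BreakIterator it = BreakIterator.getSentenceInstance(Locale.ENGLISH);
        it.setText(text);
        List<String> chunks = new ArrayList<>();
        StringBuilder current = new StringBuilder();
        int start = it.first();
        for (int end = it.next(); end != BreakIterator.DONE; start = end, end = it.next()) {
            String sentence = text.substring(start, end);
            if (current.length() > 0 && current.length() + sentence.length() > maxChars) {
                chunks.add(current.toString().trim());
                current.setLength(0);
            }
            current.append(sentence);
        }
        if (current.length() > 0) {
            chunks.add(current.toString().trim());
        }
        return chunks;
    }

    public static void main(String[] args) {
        String text = "Refunds take five days. Shipping is free over fifty dollars. "
                + "Returns need a receipt.";
        System.out.println(fixedSize(text, 30));  // cuts mid-sentence
        System.out.println(bySentence(text, 60)); // every chunk ends at a sentence boundary
    }
}
```

The fixed-size variant gives predictable chunk lengths, while the sentence-aware variant gives chunks of uneven length that each read as complete statements.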

The memory layer manages state across time. This includes short-term conversational memory (the recent exchange between user and system), long-term persistent memory (facts, preferences, and history stored across sessions), and working memory (information injected into the context window for the current task).

Memory management is a core challenge in context engineering because context windows are finite. A 128K-token window sounds large until you account for system prompts, retrieved documents, conversation history, and output generation. Every token has a cost: latency, computational overhead, and the risk of attention dilution (the model’s ability to attend to relevant information tends to degrade as the context grows).
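A quick back-of-the-envelope calculation shows how fast the window fills up. Only the 128K window comes from the text above; the system prompt, history, output reservation, and chunk size are assumed values chosen for illustration.

```java
final class ContextBudget {

    // Tokens left for retrieved documents after fixed costs are reserved.
    static int retrievalBudget(int window, int systemPrompt, int history, int reservedOutput) {
        return window - systemPrompt - history - reservedOutput;
    }

    // How many chunks of a given size fit into the remaining budget (never negative).
    static int maxChunks(int budget, int tokensPerChunk) {
        return Math.max(0, budget / tokensPerChunk);
    }

    public static void main(String[] args) {
        int window = 128_000;       // context window from the text
        int systemPrompt = 2_000;   // assumed
        int history = 30_000;       // assumed
        int reservedOutput = 4_000; // assumed
        int budget = retrievalBudget(window, systemPrompt, history, reservedOutput);
        System.out.println(budget);                 // 92000
        System.out.println(maxChunks(budget, 800)); // 115
    }
}
```

Under these assumptions, more than a quarter of the window is spent before a single retrieved chunk is added, and a longer conversation shrinks the retrieval budget further.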

The orchestration layer coordinates the flow between components. This is where routing logic, tool calls, multi-step reasoning, and error handling live. Frameworks like LangGraph model these workflows as directed graphs, where nodes are processing steps and edges represent conditional transitions. The context engineer decides which information flows where, what triggers retrieval, and how outputs from one step feed into the next.
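The graph idea can be sketched in plain Java without any framework. The following is not LangGraph's API; `MiniGraph`, its node names, and the stubbed steps are hypothetical and only show the shape of the pattern: nodes transform a shared state, and each edge inspects that state to pick the next node.

```java
import java.util.HashMap;
import java.util.Map;
import java.util.function.Function;
import java.util.function.UnaryOperator;

final class MiniGraph {
    static final String END = "END";

    private final Map<String, UnaryOperator<Map<String, String>>> nodes = new HashMap<>();
    private final Map<String, Function<Map<String, String>, String>> edges = new HashMap<>();

    // Registers a processing step and the rule that picks the next step.
    MiniGraph node(String name,
                   UnaryOperator<Map<String, String>> step,
                   Function<Map<String, String>, String> next) {
        nodes.put(name, step);
        edges.put(name, next);
        return this;
    }

    // Runs steps from `start` until an edge returns END.
    Map<String, String> run(String start, Map<String, String> state) {
        String current = start;
        while (!END.equals(current)) {
            state = nodes.get(current).apply(state);
            current = edges.get(current).apply(state);
        }
        return state;
    }

    // Example wiring: route, optionally retrieve, then answer. All steps are stubs.
    static MiniGraph example() {
        return new MiniGraph()
            .node("route",
                s -> { s.put("needsDocs", String.valueOf(s.get("question").contains("policy"))); return s; },
                s -> "true".equals(s.get("needsDocs")) ? "retrieve" : "answer")
            .node("retrieve",
                s -> { s.put("docs", "[stub document]"); return s; },
                s -> "answer")
            .node("answer",
                s -> { s.put("answer", "stub answer using " + s.getOrDefault("docs", "no docs")); return s; },
                s -> END);
    }

    public static void main(String[] args) {
        Map<String, String> state = new HashMap<>();
        state.put("question", "What is the refund policy?");
        System.out.println(example().run("route", state).get("answer"));
        // prints: stub answer using [stub document]
    }
}
```

The conditional edge out of `route` is where a context engineering decision lives: it determines whether retrieval runs at all for a given query. A production graph would also need a step limit and handling for unknown node names.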

The Stack Behind the Title

Context engineering emerged from real infrastructure needs. Companies building AI applications discovered that prompting alone does not scale. The tooling ecosystem reflects the actual problems developers are solving.

Orchestration frameworks like LangChain, LlamaIndex, and LangGraph coordinate complex workflows. LangGraph specifically introduced stateful, graph-based execution, which made it practical to build systems that maintain context across multiple model calls. The adoption numbers are not hype: they reflect production usage at scale, with the LangChain ecosystem alone reporting 300% growth in downloads between Q1 2024 and Q1 2025.

Vector databases are now core infrastructure. Pinecone, Weaviate, Qdrant, and pgvector serve different trade-offs. Pinecone is fully managed and optimizes for developer speed. Weaviate supports multimodal embeddings and hybrid search natively. Qdrant focuses on filtering efficiency at high cardinality. pgvector integrates directly with PostgreSQL, making it the practical default for teams that already operate relational databases. The choice depends on scale, existing infrastructure, and query patterns.

Reranking models add a second pass over retrieved results. Embedding similarity is a fast but imprecise signal. Cross-encoder rerankers compare the query and each candidate document directly, producing more accurate relevance scores. The cost is latency: each candidate requires its own model inference, so reranking even 20 documents can add tens to hundreds of milliseconds depending on model size and hardware. Whether that trade-off is worth it depends on the application.

Guardrails and validation pipelines ensure the system behaves within acceptable bounds. This goes beyond content filtering. Input validation catches malformed or adversarial queries before they reach the model. Output validation checks factual grounding (was this claim supported by retrieved documents?), format compliance (did the model follow the required schema?), and policy adherence (did the response stay within business rules?). In enterprise environments, these pipelines are not optional.
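A minimal sketch of output validation might look like the following. It assumes a hypothetical application convention in which the model must cite sources as `[doc:<id>]`, and it treats "cites only retrieved documents" as a crude proxy for grounding; real grounding checks compare claims against document content. The class name, citation format, and blocklist are all invented for the example.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Locale;
import java.util.regex.Matcher;
import java.util.regex.Pattern;

final class OutputGuard {
    // Hypothetical citation format: [doc:some-id]
    private static final Pattern CITATION = Pattern.compile("\\[doc:([\\w-]+)\\]");

    // Returns a list of violations; an empty list means the answer passed.
    static List<String> validate(String answer, List<String> retrievedIds, List<String> bannedPhrases) {
        List<String> violations = new ArrayList<>();

        // Grounding (naive): every cited document id must be one that was actually retrieved.
        Matcher m = CITATION.matcher(answer);
        boolean cited = false;
        while (m.find()) {
            cited = true;
            if (!retrievedIds.contains(m.group(1))) {
                violations.add("cites unknown document: " + m.group(1));
            }
        }
        // Format: this hypothetical application requires at least one citation.
        if (!cited) {
            violations.add("no citation found");
        }
        // Policy: a simple phrase blocklist standing in for business rules.
        String lower = answer.toLowerCase(Locale.ROOT);
        for (String phrase : bannedPhrases) {
            if (lower.contains(phrase.toLowerCase(Locale.ROOT))) {
                violations.add("banned phrase: " + phrase);
            }
        }
        return violations;
    }

    public static void main(String[] args) {
        List<String> retrieved = List.of("refund-policy");
        List<String> banned = List.of("guaranteed");
        System.out.println(validate("Refunds take 5 days [doc:refund-policy].", retrieved, banned));
        System.out.println(validate("Refunds are guaranteed [doc:faq-7].", retrieved, banned));
    }
}
```

Returning a list of violations rather than a boolean makes it easier to log why an answer was rejected and to decide between retrying, falling back, or escalating.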

Architecture Patterns in Practice

Several patterns have emerged as reliable approaches to context management. Understanding them concretely is more useful than knowing their names.

RAG (Retrieval-Augmented Generation) is the baseline pattern. A user query triggers retrieval, relevant documents are injected into the context, and the model generates a response grounded in that retrieved content. The key engineering decisions: chunking strategy, embedding model, similarity metric, number of retrieved documents, and whether to use reranking. Each decision has a measurable impact on output quality.

CAG (Cache-Augmented Generation) preloads a fixed knowledge base into the model’s context and reuses the precomputed KV cache across queries. It removes retrieval latency in exchange for one constraint: the entire knowledge base must fit within the context window, a trade-off that becomes increasingly viable as context windows expand. It is most useful when the knowledge base is small and stable, and queries are frequent enough that retrieval overhead is significant.

Multi-agent systems decompose complex tasks across specialized agents, each with its own context. A routing agent decides which specialized agent handles a query. Each specialist maintains focused context relevant to its domain. A synthesis agent integrates outputs. The challenge is inter-agent communication: what information passes between agents, in what format, and with what validation.

Agentic loops give the model access to tools (web search, code execution, API calls) and let it decide when to use them. The context accumulates tool call results, model reasoning, and intermediate outputs across multiple turns. Managing this growing context without losing coherence requires explicit compression and summarization strategies.
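One simple compression strategy for a growing agentic context is to keep the most recent turns verbatim and collapse everything older into a single summary entry. The sketch below assumes a rough estimate of four characters per token and takes the summarizer as a function parameter; in practice that function would likely be another model call. All names here are hypothetical.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.function.Function;

final class ContextCompactor {

    // Rough token estimate (about 4 characters per token); an assumption, not a real tokenizer.
    static int estimateTokens(String text) {
        return (text.length() + 3) / 4;
    }

    // Keeps the newest turns that fit in `budget` tokens and replaces all older turns
    // with one summary entry. The summary itself is not counted against the budget here.
    static List<String> compact(List<String> turns, int budget,
                                Function<List<String>, String> summarizer) {
        int used = 0;
        int keepFrom = turns.size();
        while (keepFrom > 0 && used + estimateTokens(turns.get(keepFrom - 1)) <= budget) {
            used += estimateTokens(turns.get(keepFrom - 1));
            keepFrom--;
        }
        if (keepFrom == 0) {
            return new ArrayList<>(turns); // everything fits, nothing to compress
        }
        List<String> result = new ArrayList<>();
        result.add(summarizer.apply(turns.subList(0, keepFrom)));
        result.addAll(turns.subList(keepFrom, turns.size()));
        return result;
    }

    public static void main(String[] args) {
        List<String> turns = List.of(
            "user: find the refund policy",
            "tool(search): 14 results, top hit refund-policy.pdf",
            "assistant: the policy allows refunds within 30 days",
            "user: does that apply to digital goods?");
        // Placeholder summarizer; in practice this would be another model call.
        Function<List<String>, String> summarizer =
            old -> "summary of " + old.size() + " earlier turns";
        System.out.println(compact(turns, 25, summarizer));
    }
}
```

Walking backward from the newest turn preserves the immediate conversational state, which is usually what the next model call depends on most; verbose tool output from earlier steps is what gets compressed first.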

Why This Matters for Java Developers

The enterprise runs on Java. That is not changing. And when companies need to integrate AI into existing systems, they need developers who understand both the AI layer and the production infrastructure around it.

LangChain4j is the practical path for Java developers. It is not a simple port of the Python library. It is a framework designed with Java idioms in mind: type safety, dependency injection, annotation-based configuration. With the Quarkus LangChain4j extension, the @RegisterAiService annotation lets you define AI-powered interfaces that integrate with your existing CDI beans. Running on Quarkus also means you can add context-aware AI capabilities with native image compilation support, fast startup, and the deployment model your operations team already understands.

The core abstractions map to familiar Java patterns. A ChatMemory is a repository with a retention policy. A ContentRetriever is a query interface over an embedding store. An EmbeddingStore wraps a vector database behind a standard interface, whether that is an in-memory store for testing or a production pgvector deployment.

If you are a Java developer, you already have the foundation for context engineering. You understand connection pooling (relevant to embedding store throughput), asynchronous processing (critical for retrieval pipelines), and lifecycle management (essential for memory cleanup and context expiration). These are not tangential skills. They are directly applicable.

The practical starting point is building a domain-specific RAG system with LangChain4j, Quarkus, and pgvector. That combination covers the full stack: orchestration framework, Java application layer, and vector storage, using a runtime and a database that most Java teams already operate.

Context Window Constraints and Trade-offs

One of the persistent misconceptions in this space is that larger context windows eliminate the need for context engineering. They do not. They change the problem.

A 1 million token context window does not mean you should put 1 million tokens into it. Models do not use every position in the context equally well. Liu et al. (2023) found that models tend to use information at the beginning and end of the context more reliably than information in the middle (the “lost in the middle” problem). Structuring your context so that the most critical information sits where the model attends to it reliably is itself a context engineering problem.
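One common mitigation is to reorder retrieved documents so the highest-ranked ones sit at the edges of the context and the weakest ones end up in the middle. The sketch below assumes the input list is already sorted most relevant first; `EdgeOrdering` is a hypothetical name, and whether this helps in a given system should be measured rather than assumed.

```java
import java.util.ArrayList;
import java.util.List;

final class EdgeOrdering {

    // Input: documents sorted most relevant first.
    // Output: most relevant documents at the start and end, least relevant in the middle.
    static <T> List<T> reorder(List<T> rankedDocs) {
        List<T> front = new ArrayList<>();
        List<T> back = new ArrayList<>();
        for (int i = 0; i < rankedDocs.size(); i++) {
            if (i % 2 == 0) {
                front.add(rankedDocs.get(i));   // ranks 1, 3, 5, ... fill from the start
            } else {
                back.add(0, rankedDocs.get(i)); // ranks 2, 4, ... fill from the end
            }
        }
        front.addAll(back);
        return front;
    }

    public static void main(String[] args) {
        System.out.println(reorder(List.of("d1", "d2", "d3", "d4", "d5")));
        // prints [d1, d3, d5, d4, d2]
    }
}
```

In the example, the two strongest documents (`d1`, `d2`) land in the first and last positions, and the weakest (`d5`) lands in the center.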

Larger windows also increase latency and cost. Processing 500K tokens takes significantly longer and costs more than processing 10K tokens. For latency-sensitive applications, this matters.

The practical implication: context engineering is about putting the right information in the context window, not the maximum information. Retrieval, filtering, and compression remain relevant skills regardless of how large the context window gets.

The Salary Signal

The market is signaling that context engineering is valued differently from general AI prompting skills.

Based on current market data aggregated across job postings and compensation platforms, base salaries range from $112K to $179K, with a median around $141K. Specialists with retrieval and orchestration expertise command 30% to 50% more than generalist AI roles. Senior positions at major technology companies reach $250K to $350K, particularly for roles that combine context engineering depth with production systems experience.

These numbers reflect the reality that context engineering requires systems thinking, infrastructure knowledge, and AI understanding simultaneously. It is a narrower skill set than pure software engineering, but broader than pure prompt engineering.

The Transition Path

Prompt engineering is how most developers enter the AI space. You learn to craft effective prompts, experiment with temperature and sampling parameters, and get decent outputs. This is a reasonable starting point.

Context engineering is what happens when you need to scale. You realize prompts alone are insufficient when users ask questions that require information the model does not have. You need retrieval. You need memory across sessions. You need consistency when the same query comes from different users with different histories.

The transition requires a shift in thinking. Prompt engineering is about the immediate interaction. Context engineering is about the system that makes a sequence of interactions coherent and accurate. You are no longer just writing prompts. You are designing the information flow that makes those prompts effective.

For Java developers specifically, the transition path is more natural than it appears. The infrastructure problems in context engineering (reliability, latency, state management, integration with existing systems) are problems the Java ecosystem has solved multiple times. The domain-specific knowledge required is mostly new. The engineering discipline is familiar.

Where to Start

If you are a Java developer looking to build context engineering skills:

Start with LangChain4j. Build a retrieval-augmented system for a specific domain you already understand. The specificity matters: a generic chatbot teaches you less than a system that answers questions about a real document corpus with real quality requirements.

Learn how vector databases work at the implementation level. pgvector integrates with PostgreSQL, which most Java developers already operate. Understanding how approximate nearest neighbor search works, and why it can miss true nearest neighbors (indexes such as HNSW trade recall for speed), matters for debugging retrieval failures.

Study retrieval patterns beyond basic similarity search. Understand the difference between dense retrieval (embedding-based), sparse retrieval (BM25-based), and hybrid approaches that combine both. Each has different failure modes. Hybrid approaches typically outperform either in isolation, but they require tuning the interpolation weight.

Instrument your pipelines. Build in observability from the start: log which documents were retrieved for each query, which chunks were used in the final context, and what the output was. Without this, you cannot systematically improve retrieval quality.

Understand guardrails as a first-class requirement, not an afterthought. Define what valid outputs look like for your application before you build. Build validation into the pipeline from the beginning, not as a layer added after launch.
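To make the hybrid retrieval point above concrete, the sketch below fuses dense and sparse scores with a single interpolation weight. It assumes min-max normalization to bring the two score scales into the same range and treats a document missing from one retriever as scoring zero on that signal. Both choices are illustrative, and other fusion schemes (such as rank-based fusion) are also widely used. The class name and sample scores are invented.

```java
import java.util.Collections;
import java.util.HashMap;
import java.util.HashSet;
import java.util.Map;
import java.util.Set;

final class HybridScorer {

    // Min-max normalizes scores into [0, 1] so dense and sparse scores are comparable.
    static Map<String, Double> normalize(Map<String, Double> scores) {
        Map<String, Double> out = new HashMap<>();
        if (scores.isEmpty()) {
            return out;
        }
        double min = Collections.min(scores.values());
        double max = Collections.max(scores.values());
        for (Map.Entry<String, Double> e : scores.entrySet()) {
            out.put(e.getKey(), max == min ? 1.0 : (e.getValue() - min) / (max - min));
        }
        return out;
    }

    // alpha = 1.0 uses only dense scores, alpha = 0.0 uses only sparse scores.
    static Map<String, Double> fuse(Map<String, Double> dense, Map<String, Double> sparse, double alpha) {
        Map<String, Double> d = normalize(dense);
        Map<String, Double> s = normalize(sparse);
        Set<String> ids = new HashSet<>(d.keySet());
        ids.addAll(s.keySet());
        Map<String, Double> fused = new HashMap<>();
        for (String id : ids) {
            // A document missing from one retriever contributes 0 for that signal.
            fused.put(id, alpha * d.getOrDefault(id, 0.0) + (1 - alpha) * s.getOrDefault(id, 0.0));
        }
        return fused;
    }

    public static void main(String[] args) {
        Map<String, Double> dense = Map.of("a", 1.0, "b", 0.5, "c", 0.0);    // e.g. cosine similarity
        Map<String, Double> sparse = Map.of("b", 10.0, "c", 0.0, "d", 5.0);  // e.g. BM25 scores
        System.out.println(fuse(dense, sparse, 0.5));
    }
}
```

With `alpha = 0.5`, document `b` ranks first because both retrievers agree on it, even though `a` has the highest dense score. Shifting `alpha` toward 1.0 moves `a` back to the top, which is the tuning decision the interpolation weight represents.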

The role is still defining itself, and the tooling is evolving rapidly. But the direction is clear: companies need developers who can design information environments for AI systems, not just prompt models.

The future of enterprise AI is not just about better prompts. It is about better context.

References

People & Concepts

- Andrej Karpathy on Context Engineering (X/Twitter)

- Gartner: Context Engineering — Why it’s Replacing Prompt Engineering for Enterprise AI

Research Papers

- Lost in the Middle: How Language Models Use Long Contexts (Liu et al., 2023) — arXiv

- Retrieval-Augmented Generation for Knowledge-Intensive NLP Tasks (Lewis et al., 2020) — arXiv

- Don’t Do RAG: When Cache-Augmented Generation is All You Need (2024) — arXiv

Orchestration Frameworks

- LangChain — Open Source LLM Framework

- LangGraph — Stateful Agent Orchestration Framework

- LlamaIndex — Framework for Context-Aware AI Agents

- LangChain4j — LLM Integration Library for Java

Vector Databases

- Pinecone — Managed Vector Database

- Weaviate — Open Source Vector Database with Hybrid Search

- Qdrant — High-Performance Vector Search Engine

- pgvector — Open Source Vector Similarity Search for PostgreSQL