I’m in São Paulo this week for the StartSe AI Festival 2026 (May 13 and 14, at Pro Magno Centro de Eventos). Context for those reading from outside Brazil: this is the largest AI gathering in the country right now. Around 4,000 people in the room, and a lineup that brought Anthropic, Google DeepMind, Microsoft, Genspark, ElevenLabs, McKinsey, and IBM to the same stage as Brazilian operators like iFood. If you build software for a living and want to feel where the industry is moving, this is the room.

What follows is a one-paragraph pass per talk, plus the bullets I’m keeping. I’ll write longer follow-ups in the next few days.

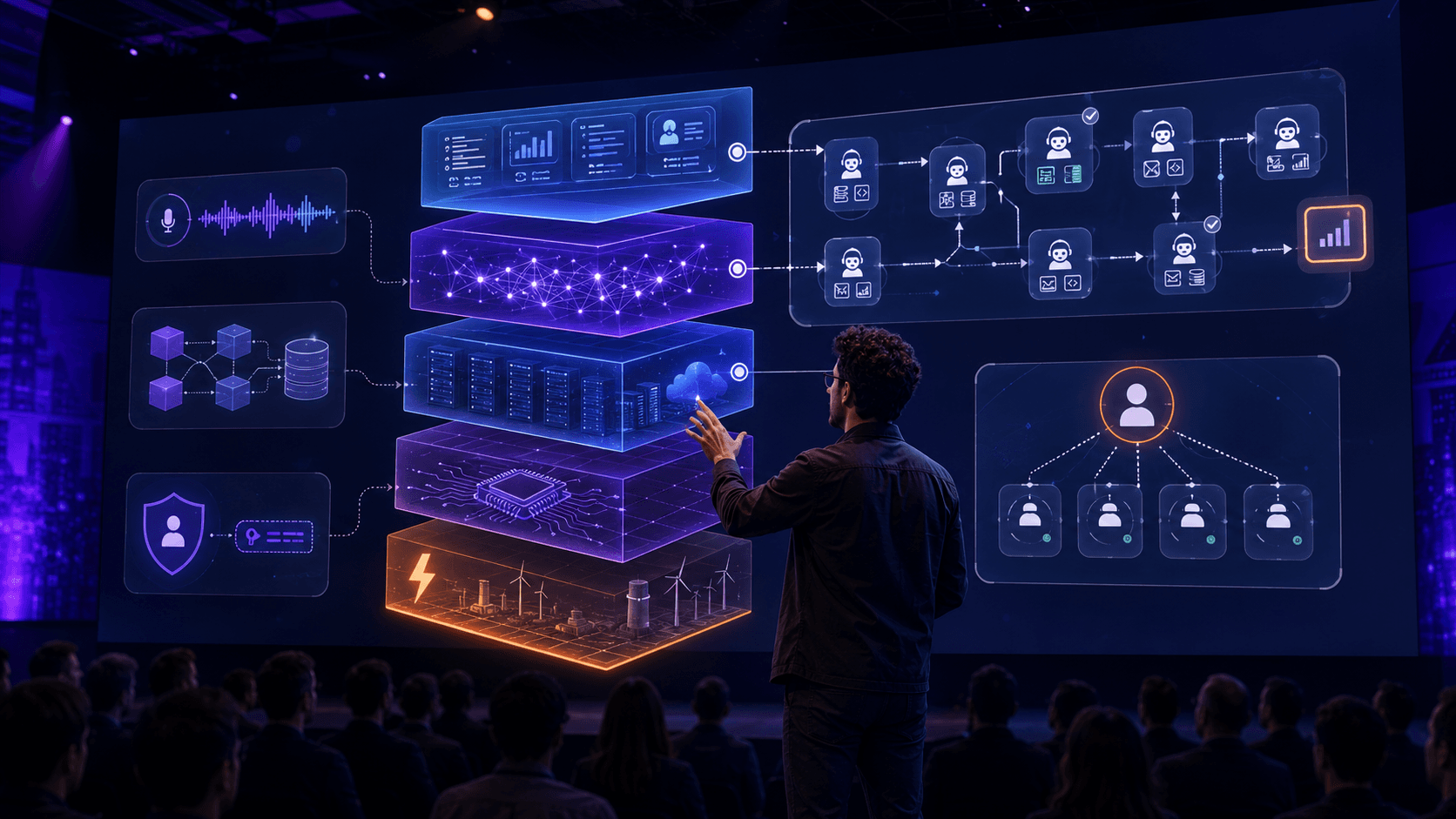

Cristiano Kruel, Chief Innovation Officer at StartSe, opened the festival and framed the AI economy as a five-layer stack to help the room locate themselves. He walked through the CAPEX behind it (roughly US$ 645 billion in 2026 across Microsoft, Meta, Amazon, Google and Oracle) and drew a parallel with the early commercial internet to argue we’re still very early.

- Five layers to read AI economically: energy, chips, infrastructure, models, applications.

- His take: the applications layer has barely started. Chatbots are not the destination.

- Protocols (MCP, A2A, ACP) will play the structural role TCP/IP played for the web. Whoever owns the protocol owns the game.

Henrique Savelli, Applied AI Lead at Anthropic, opened with a number: 90% of all code written inside Anthropic is now written by agents. He used that to introduce Anthropic’s enterprise lineup (Claude Code for engineers, Cowork for non-technical roles, Claude Design for design assets) and announced Managed Agents live on stage, a fully hosted agent layer running on Anthropic’s own infrastructure.

- Three-question framework before building an agent: is the deliverable well-defined, is the context connectable, is reviewing faster than redoing? Three yeses, real agent case.

- The agent equation he uses internally: agent = model + harness. Harness engineering is now a research priority at Anthropic, not just product work.

- Public cases he cited: Novo Nordisk reports 90% time savings on regulatory documentation, ServiceNow 95% on sales prep, Quanti 90% on commercial proposal time.
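
The three-question framework above is easy to turn into a pre-build checklist. What follows is a minimal sketch of my own, not something shown on stage: the `AgentCandidate` type and its field names are hypothetical, and the only logic taken from the talk is "three yeses, real agent case".

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class AgentCandidate:
    """A task being considered for an agent. All field names are hypothetical."""
    name: str
    deliverable_well_defined: bool   # Is the deliverable well-defined?
    context_connectable: bool        # Can the agent be connected to the context it needs?
    review_faster_than_redo: bool    # Is reviewing the output faster than redoing it?


def failed_checks(candidate: AgentCandidate) -> List[str]:
    """Return the questions answered 'no'; an empty list means three yeses."""
    checks = {
        "deliverable_well_defined": candidate.deliverable_well_defined,
        "context_connectable": candidate.context_connectable,
        "review_faster_than_redo": candidate.review_faster_than_redo,
    }
    return [question for question, ok in checks.items() if not ok]


def is_agent_case(candidate: AgentCandidate) -> bool:
    """Three yeses qualify the task; any 'no' sends it back for rework."""
    return not failed_checks(candidate)
```

Returning the failed questions rather than a bare boolean makes the "no" actionable: it tells you whether to tighten the deliverable, wire up context, or rethink the review step.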

Priscyla Laham, President of Microsoft Brazil, framed Microsoft’s bet around the “Frontier Firm”: companies operated by AI and led by humans. Her message to engineers and executives in the room: AI is an amplifier. The business objective doesn’t change. The workforce composition does.

- Three stages of enterprise AI maturity: assistant, expert agents, autonomous agents with human oversight.

- 40% of Microsoft’s own software development is already done by agents. No layoffs reported; 4x feature velocity.

- Azure AI Foundry hosts 11,000+ models. The strategic call she made is interoperability and openness, because the winning model in six months won’t be the one you use today.

Justin Liu, co-founder and Chief Architect at Genspark, brings prior experience at Google, Facebook, and Baidu’s Xiaodu Technology. The company, led by CEO Eric Jing, hit US$ 50M ARR within 5 months of launch, surpassed US$ 200M ARR in 11 months, and reached a US$ 1.6B valuation in its Series B extension in March 2026. He used OpenAI’s five-level AGI ladder to argue most enterprises are stuck between Level 1 (chatbots) and Level 3 (real agents). His technical thesis: long-horizon agents need durable memory that lives outside the context window.

- Durable agents need three things: action, continuity, memory. Most coding agents only handle context-window memory, which collapses past 30 minutes or so.

- New job category to watch on LinkedIn: “10x AI specialist”. The human who stops waiting for definitions and starts running experiments.

- AI cannot own end-to-end responsibility. Humans stay accountable. Design permissions, traceability, and audit accordingly.
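
To make "memory that lives outside the context window" concrete, here is a minimal sketch under my own assumptions. It is not Genspark's architecture. The `DurableMemory` class, its method names, and the SQLite backing store are all illustrative choices; the only idea taken from the talk is that agent state should survive a restart instead of living in the prompt.

```python
import os
import sqlite3
import tempfile
from typing import Optional


class DurableMemory:
    """Key-value memory persisted on disk, independent of any context window."""

    def __init__(self, path: str) -> None:
        self.conn = sqlite3.connect(path)
        self.conn.execute(
            "CREATE TABLE IF NOT EXISTS memory "
            "(key TEXT PRIMARY KEY, value TEXT NOT NULL)"
        )
        self.conn.commit()

    def remember(self, key: str, value: str) -> None:
        # INSERT OR REPLACE overwrites any earlier value stored under the same key.
        self.conn.execute(
            "INSERT OR REPLACE INTO memory (key, value) VALUES (?, ?)",
            (key, value),
        )
        self.conn.commit()

    def recall(self, key: str) -> Optional[str]:
        row = self.conn.execute(
            "SELECT value FROM memory WHERE key = ?", (key,)
        ).fetchone()
        return row[0] if row else None

    def close(self) -> None:
        self.conn.close()


def demo_resume() -> Optional[str]:
    """Write in one 'session', then read from a fresh one sharing no in-process state."""
    path = os.path.join(tempfile.mkdtemp(), "agent_memory.db")

    session_one = DurableMemory(path)
    session_one.remember("current_step", "3 of 7: migrate billing tables")
    session_one.close()

    session_two = DurableMemory(path)  # simulates an agent restarted later
    step = session_two.recall("current_step")
    session_two.close()
    return step
```

A real long-horizon agent would need more than a key-value table (retrieval, summarization, expiry), but even this much lets a run pick up where it left off after the context is gone.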

Piero Franceschi, CEO of StartSe, gave less of a tech update and more of a provocation: who do we become when machines are more productive than we are? He warned that “generative AI can become degenerative AI” when we outsource memory, attention, knowledge, and decision-making. The framing is what matters for engineers: technical skill alone is no longer enough.

- Three differentiating human traits in an agent world: courage, inventiveness, dialogue.

- Prompt pattern he recommends: think on paper for 15 minutes before opening the model. Then use the model to pressure-test your thinking, not to make you comfortable.

- Productivity and intelligence are decoupling. Being productive no longer requires being smart, and that changes which human qualities the market will reward.

Eduardo Villalba, Head of Marketing of ElevenLabs Brazil, traced the path from the Intel “tan tan tan tan” jingle and HBO’s static through Netflix’s “Tudum” to today’s synthetic voices, arguing that voice is becoming a primary brand interface. That holds especially in Brazil, which, per his data, sends 4x more WhatsApp audio messages than India.

- Voice design pillars: who’s speaking, how they sound, how they move through the conversation, where the interaction happens.

- Production loop: briefing, prompting, testing, refining, implementing.

- Red flag he called out: building voice AI just to “look modern” produces a fancy IVR. Empathy and personality have to be deliberate design choices.

Peter Danenberg, Senior Software Engineer at Google DeepMind, worked on Google Assistant, Bard and Gemini for nearly a decade. His talk was the most personal of the day. He told the story of his mother working for ChatGPT, literally months of unpaid labor labeling 50,000 family photos and Amazon orders for a chatbot that, in the end, couldn’t deliver the promised report. He used the story to introduce the “inversion of control” idea (humans working for AI instead of the other way around) and then showed how to flip the dynamic with a small but capable agent stack.

Rafael Siqueira, Partner at McKinsey & Company, leads Build by McKinsey in Latin America. His pitch: most companies are still treating AI as a tool when it is, in practice, a transformation of how software gets built. He laid out the new development cycle (humans orchestrate, agents execute) and the org changes that come with it.

- Humans orchestrate, agents execute. The work day extends to 24 hours because agents keep running while you sleep (he runs four Claude Code and Codex windows in parallel at home).

- New team shape: 3 to 5 builders shipping end to end outperform the 8 to 12 person multidisciplinary squad of the previous era. Specs replace tickets. Intention replaces documentation.

- New roles on the org chart: Agent Ops, AI Risk, security engineering for agents. Traditional QA, DevOps, and specialist tracks reshape around them.

Lucas Leung, Director at Oracle, sharpened the Vibe Coding vs Vibe Engineering split that Rafael had set up. Short version: Vibe Coding is improvising with LLMs (stand-up comedy). Vibe Engineering is building structured, production-ready systems with LLMs in the workflow (a full film). The discipline shift: from “make it work in the demo” to “make it scale in production”.

- Vibe Coding ships code that looks done. Vibe Engineering ships a system that stands up.

- Speed without control hides risk. Vibe Coding hides the risk. Vibe Engineering surfaces it.

- LLM-assisted does not mean improvised. Structure, testing, and architecture still matter.

Isabella Piratininga, Director of Technology and Innovation at iFood, presented what is likely one of the largest production agentic deployments in Brazil: Ailo, iFood’s conversational agent, already serving a slice of the platform’s 65M monthly users. The point she kept hammering: behavior change is the bottleneck, and agentic products should be treated as a re-platforming.

- Five product principles she walked through: trust (built in micro wins), cost per task instead of cost per token, determinism (don’t reason through what you can route), evals on the middle of the chain (not just inputs and outputs), and onboarding (consistent, repeated small wins).

- Ordering on iFood is up to 50% faster via Ailo than through the traditional app. Around 2M users have touched it; 200K active per month.

- Takeaway for builders: an agent is not a feature on a roadmap. Plan for at least one rewrite. Ailo’s architecture has already been rebuilt and will be rebuilt again.
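
Two of the principles above, "don’t reason through what you can route" and cost per task instead of cost per token, fit in one small sketch. This is my own illustration, not Ailo’s code: the route patterns, handler names, `fake_model` stand-in, and the price constant are all hypothetical.

```python
import re
from dataclasses import dataclass

# Hypothetical price; real pricing varies by provider and model.
PRICE_PER_1K_TOKENS_USD = 0.01


@dataclass
class Task:
    """One user-visible job, possibly spanning several messages and model calls."""
    name: str
    tokens_used: int = 0
    model_calls: int = 0

    def cost_usd(self) -> float:
        # Cost is tracked for the whole task, not for each individual call.
        return self.tokens_used / 1000 * PRICE_PER_1K_TOKENS_USD


# Deterministic routes: requests that map straight to a handler, no model needed.
ROUTES = [
    (re.compile(r"\btrack\b.*\border\b", re.IGNORECASE), "handler:order_status"),
    (re.compile(r"\breorder\b", re.IGNORECASE), "handler:reorder_last"),
]


def fake_model(message: str):
    """Stand-in for an LLM call; returns (reply, tokens consumed)."""
    return "model:free_form_answer", 800


def handle(message: str, task: Task) -> str:
    """Try deterministic routes first; fall back to the model only when none match."""
    for pattern, handler in ROUTES:
        if pattern.search(message):
            return handler
    reply, tokens = fake_model(message)
    task.tokens_used += tokens
    task.model_calls += 1
    return reply
```

Routed requests cost nothing in tokens and always behave the same way, and the `Task` ledger gives you the number that actually matters to the business: what it cost to get one order placed.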

Marcelo Braga, President of IBM Brasil, closed Day 1 with IBM’s enterprise AI playbook. He anchored his talk in the IBM Enterprise 2030 study from the IBM Institute for Business Value and walked through the five themes IBM laid out the week before at Think 2026 in Boston: AI-native developers, agent identity management, multi-cloud AIOps, sovereignty, and data. He also introduced IBM Bob (the agentic development platform that went generally available at Think) and pointed to the recently closed Confluent acquisition (the commercial Kafka platform company, founded by Kafka’s original creators, around US$ 11 billion) as IBM’s bet on real-time data for AI.

- The gap between bug discovery and active attack has collapsed. A vulnerability surfaced in the morning becomes an active attack by afternoon. Production pipelines have to test the negative path and ship fixes inside the same day.

- Agent identity management is the next hard problem. Giving an agent your username and password works until a prompt injection turns the agent against you. Permissions need to be scoped to context, transaction volume, API window, and time of day.

- Token FinOps is now an operational concern. A one-page status update gets expanded to ten pages, then passed back through the model to compress. Multiply by every project and every team, and visibility problems hit before the bill arrives.
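
The scoped-permissions point from the agent identity bullet can be sketched as a check that runs before every agent action. This is an illustration under my own assumptions, not an IBM product API: `AgentScope`, `AgentRequest`, and `authorize` are hypothetical names. I am reading "transaction volume" as a per-request amount cap and "API window" as an allowlist of endpoints; both are interpretations.

```python
from dataclasses import dataclass
from datetime import time
from typing import FrozenSet, List


@dataclass(frozen=True)
class AgentScope:
    """What an agent may do, instead of handing it a user's full credentials."""
    allowed_contexts: FrozenSet[str]
    allowed_apis: FrozenSet[str]      # assumption: "API window" as an endpoint allowlist
    max_transaction_usd: float        # assumption: "transaction volume" as a per-request cap
    active_from: time
    active_until: time                # window must not cross midnight in this sketch


@dataclass(frozen=True)
class AgentRequest:
    context: str
    api: str
    amount_usd: float
    at: time


def authorize(scope: AgentScope, request: AgentRequest) -> List[str]:
    """Return the violated constraints; an empty list means the request is allowed."""
    violations = []
    if request.context not in scope.allowed_contexts:
        violations.append("context")
    if request.api not in scope.allowed_apis:
        violations.append("api")
    if request.amount_usd > scope.max_transaction_usd:
        violations.append("transaction_volume")
    if not (scope.active_from <= request.at <= scope.active_until):
        violations.append("time_of_day")
    return violations
```

The point of the design is that a prompt-injected agent can only misbehave inside this envelope: a hijacked checkout agent still cannot issue a refund at 3 a.m.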

Day 2 starts in a few hours. More to come.