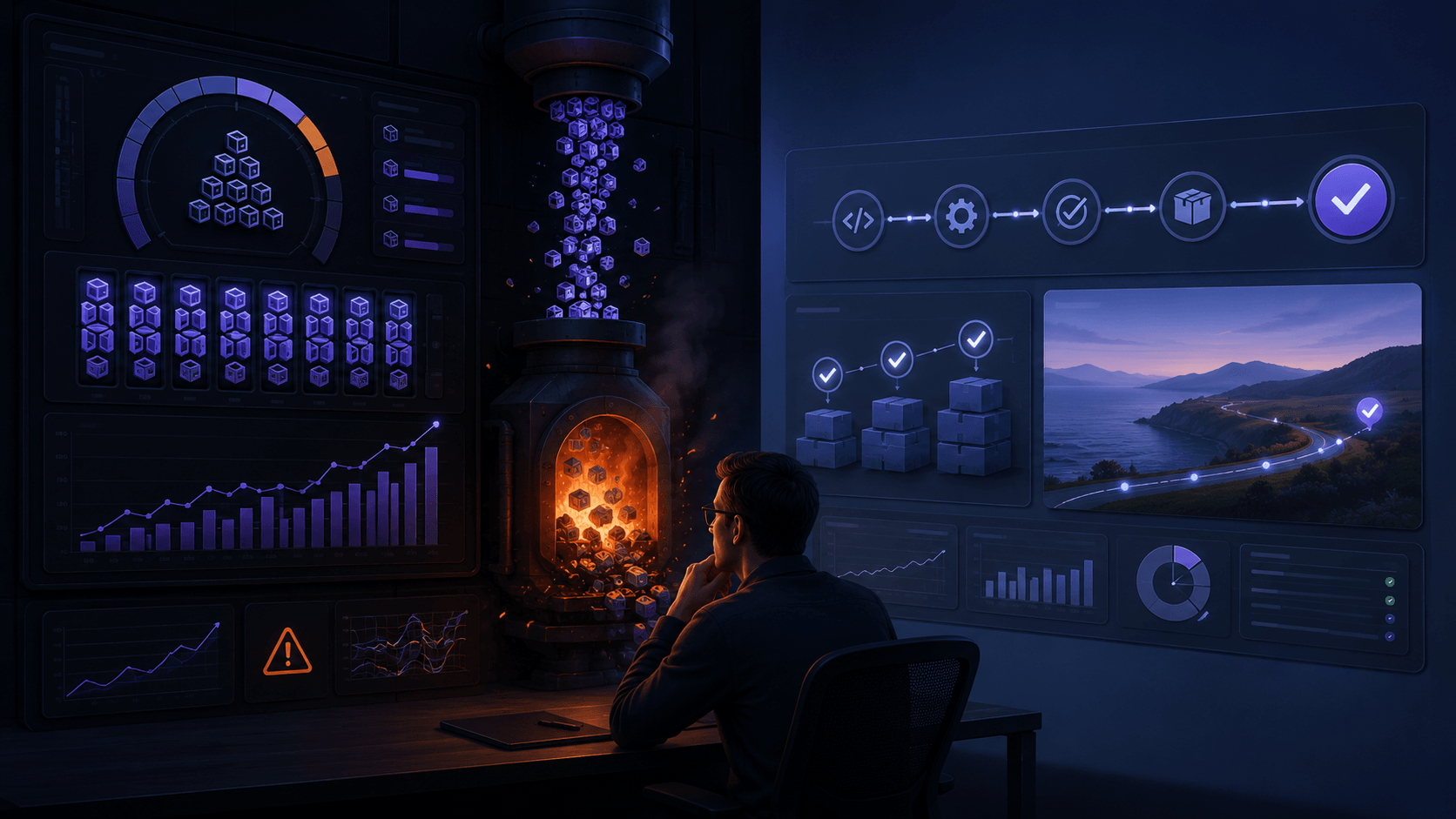

There’s a term making the rounds that you should know: tokenmaxxing.

It describes engineers competing to burn AI tokens under employer-imposed usage metrics. The concept exposes something structural about how companies measure AI adoption.

Here’s how it works: some companies offer generous token budgets as a recruiting perk. Then they start treating consumption as a productivity signal. This makes engineers optimize for the metric, not the outcome.

This is Goodhart’s Law in practice:

“When a measure becomes a target, it ceases to be a good measure.”

If you reward engineers for tokens burned, you get maximum tokens burned. Not the maximum value delivered.

The macro picture is what makes this worth paying attention to. By some estimates, $1 trillion in AI infrastructure spending is being priced against demand signals that are partly performative. Some are calling this the “$1 trillion blind spot.”

And it cuts both ways. In the Shadow AI Economy, engineers use AI daily without that usage surfacing in any report. Meanwhile, tokenmaxxing inflates consumption numbers with artificial usage.

The market is reading demand that is overcounted on one side and undercounted on the other.

The provider split tells you how unsettled this still is:

- OpenAI is spending hundreds of billions betting the demand is real.

- Anthropic imposed consumption limits and cut off third-party tools that burned through tokens without meaningful use.

Two industry leaders. Two fundamentally different reads on the same signal.

If you lead an engineering team, my advice is simple: don’t measure token consumption. Measure what your team ships. If the metric distorts the behavior, the behavior distorts the market.

What are you seeing in your organization? Is AI usage measured by outcome or by volume?