As mentioned in my previous post, I’m in São Paulo this week for the StartSe AI Festival 2026 (May 13 and 14, at Pro Magno Centro de Eventos).

For those joining now: this is the largest AI gathering in Brazil right now, around 4,000 people in the room, with a lineup that pulled global names like Replit, Amazon AGI, MIT, Glean, and Volkswagen alongside Brazilian operators and a healthy dose of practitioners.

Same format as the last post: one paragraph per talk, plus bullets for the points I’m keeping. Deeper write-ups on some talks are coming in the next few days.

Marcelo Echeverria, Country Manager at Replit Brasil, traced the path from Replit’s first agent (September 2024) to the vibe coding moment (term coined by Andrej Karpathy in February 2025, picked as Collins Word of the Year 2025). His core message: building software shifted from a developer-only activity to something the person closest to the problem can do, and the security surface scales just as fast.

- 75% of all new code at Google is now AI-generated (Cloud Next 2026). Replit’s valuation went from US$ 3B to US$ 9B in six months. 85% of Fortune 500 companies have users on Replit.

- Andrew Ng keeps pushing back on the “AI ends programming” narrative, with newspaper headlines from early 2026 backing him up. More software gets built, and more demand for builders follows.

- The risk surface when non-developers ship at scale: hardcoded API keys, supply chain attacks, exposed prompts.

- Only 2% of the US population understands what vibe coding is today. That gap is the window.
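
On the hardcoded API keys point, here is a minimal sketch of my own (not something shown in the talk) of the usual alternative: read the key from the environment and fail loudly if it is missing. The variable name `EXAMPLE_API_KEY` and both helper functions are hypothetical, chosen only for illustration.

```python
import os


class MissingSecretError(RuntimeError):
    """Raised when a required secret is not present in the environment."""


def load_api_key(env_var: str = "EXAMPLE_API_KEY") -> str:
    """Read an API key from the environment instead of the source code.

    EXAMPLE_API_KEY is a hypothetical variable name used for illustration.
    """
    key = os.environ.get(env_var, "").strip()
    if not key:
        # Fail loudly at startup rather than shipping a fallback literal.
        raise MissingSecretError(f"Set {env_var} before running the app")
    return key


def redact(secret: str, visible: int = 4) -> str:
    """Return a log-safe version of a secret, keeping only the last characters."""
    if len(secret) <= visible:
        return "*" * len(secret)
    return "*" * (len(secret) - visible) + secret[-visible:]
```

The key never appears in the repository, and anything that reaches a log goes through `redact` first. It does not address supply chain attacks or exposed prompts, which need their own controls.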

Paulo Silveira, co-founder of Alura (Grupo Alun, which acquired StartSe in September 2025), made the case that the cost of producing a line of code has dropped to effectively zero. Despite that, the volume of software actually shipping has not exploded the way you would expect. His framing: software is fundamentally about deciding what to build, and that part still takes humans.

- Three steps for the “citizen developer” who is not a full-time engineer: master the domain, learn to orchestrate agents, and become a multi-skill professional.

- Sequoia’s “One Billion Developers” thesis: the universe of people producing software with technology goes from ~100M to 1B. New roles (Agent Ops, AI builder) get added to the mix.

- Robert Heinlein quote he leaned on: “Specialization is for insects.” The new market premium goes to skills that apply across disciplines.

- Pedro Franceschi (CEO of Brex): leaders need to operate at all levels. If you cannot judge whether your team’s output is good, agents do not save you.

- Storytelling and clarity of narrative remain leadership skills no model replaces. Managers who only relay information are out.

Leonardo Zappa, Go-to-Market lead for Glean in Latin America, drew a parallel between 2022 and the current AI moment. His real point: the risk landscape has shifted. Failing to adopt AI was the 2022 problem. Adopting AI without discipline is the 2026 problem.

- Even the labs are now talking ROI. OpenAI launched ads in ChatGPT in February 2026. Anthropic is moving deeper into enterprise. If they care about the math, you should too.

- Stop rebuilding systems of record. Salesforce, your ERP, and your CRM survive AI because operational reliability does not go out of style. Agents need trustworthy data more than humans do.

- Model choice cannot be a free-for-all. If anyone on the team can pick Opus 4.7 for a Haiku-grade task, you will blow the budget before you measure the value.

- Context engineering is the new bottleneck. Hours spent on prompt engineering have hit diminishing returns.
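
The “model choice cannot be a free-for-all” point can be sketched as a small policy layer. This is my own illustration under stated assumptions, not anything Glean presented: the tier names, the `ModelPolicy` class, and the task types are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical tier names, ordered from cheapest to most expensive.
TIER_ORDER = ["small", "medium", "large"]


@dataclass(frozen=True)
class ModelPolicy:
    """Maps task types to the largest model tier they are allowed to use."""
    max_tier_by_task: dict
    default_tier: str = "small"

    def resolve(self, task_type: str, requested_tier: str) -> str:
        """Return the tier to use, capping the request at the policy limit."""
        allowed = self.max_tier_by_task.get(task_type, self.default_tier)
        if TIER_ORDER.index(requested_tier) > TIER_ORDER.index(allowed):
            return allowed  # downgrade instead of silently overspending
        return requested_tier


policy = ModelPolicy({"classification": "small", "code_review": "large"})
print(policy.resolve("classification", "large"))  # capped at the policy limit
```

Unknown task types fall back to the cheapest tier, so a new use case has to be added to the policy before it can reach the expensive model.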

A panel on AI-first companies that scaled past the pilot, moderated by Rodrigo Comazzetto (Partner at Crescera Capital) with Thiago Rached (CEO of Letrus) and José Caodaglio (founder of Colmeia), made the point that 95% of AI projects never make it to production, and the survivors share one trait: they started from a real problem and let the technology come second. Letrus is a Brazilian edtech that was a 2019 laureate (announced in 2020) of UNESCO’s King Hamad Bin Isa Al-Khalifa Prize for the Use of ICT in Education, on the theme of AI in education. Colmeia operates conversational and CDP infrastructure for large Brazilian enterprises.

- Letrus’ MIT-funded RCT: after students used the writing platform for five months, their state moved from 8th to 2nd in the Enem writing ranking among Brazilian states.

- Colmeia’s architecture choice: many small prompts orchestrated by a system they call “archetypes,” instead of one large prompt. Lower hallucination rate, easier to scale.

- The Air Canada chatbot lawsuit came up as a B2C cautionary tale. The bot promised a bereavement discount; the tribunal made the airline honor it.

- Per the panel, two-thirds of developers laid off in AI-related cuts have already been rehired, often via offshore operations.

- Patient capital lets founders make bold decisions. Both Letrus and Colmeia credited that as a major part of the survival path.
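
To make the “many small prompts” idea concrete, here is a rough sketch of chaining narrow prompts instead of sending one large one. It is not Colmeia’s “archetypes” system, whose internals were not shown; `run_steps`, the templates, and the stand-in `fake_model` are hypothetical names of my own.

```python
from typing import Callable, List

# A "model" here is any function from prompt text to response text.
Model = Callable[[str], str]


def run_steps(model: Model, templates: List[str], user_input: str) -> List[str]:
    """Run small prompts in sequence, feeding each output into the next step."""
    outputs = []
    current = user_input
    for template in templates:
        prompt = template.format(input=current)
        current = model(prompt)
        outputs.append(current)
    return outputs


def fake_model(prompt: str) -> str:
    """Stand-in for a real model call: echoes the prompt in upper case."""
    return prompt.upper()


steps = ["classify: {input}", "reply to: {input}"]
print(run_steps(fake_model, steps, "hi"))
```

Because every step has a single job, each intermediate output can be inspected or validated on its own, which is one plausible reason a design like this is easier to debug than a single large prompt.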

Ana Trišović, Research Scientist at the MIT FutureTech Lab, presented hard data from a study of 268,000 research papers and almost 2,000 foundation models. Her headline number: the gap between the frontier model being built and the median model being adopted in production is now 22x, up from 1.8x five years ago. The model on the magazine cover is not the model creating value.

- Adoption strategy beats model selection. The value sits in your pipeline, your data, and your fine-tuning workflow. The API you call is replaceable.

- 81% of scientific AI users run open-weight models. Open dominates the install base. Closed models grow faster (423% annually between 2020 and 2023), but mostly at the capability frontier.

- Three failure modes she sees repeatedly: picking models by benchmark alone, treating AI as a procurement problem, and betting your architecture on a single model.

- For Brazilian firms specifically: fine-tune open models on Portuguese text, Brazilian medical data, or your specific domain. That is something no API gives you.

- Assume the model you are using today is obsolete in 24 months or less. Build accordingly.
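
One way to avoid betting the architecture on a single model is to have application code ask for a role instead of a model name. The sketch below is my own reading of that advice, not code from the talk; `TextModel`, `EchoModel`, and `ModelRegistry` are hypothetical names.

```python
from typing import Dict, Protocol


class TextModel(Protocol):
    """Anything with a complete() method can serve as a backend."""
    def complete(self, prompt: str) -> str: ...


class EchoModel:
    """Stand-in backend; a real one would wrap a vendor SDK or a local model."""
    def __init__(self, tag: str) -> None:
        self.tag = tag

    def complete(self, prompt: str) -> str:
        return f"[{self.tag}] {prompt}"


class ModelRegistry:
    """Lets callers ask for a role ("summarizer") instead of a model name."""
    def __init__(self) -> None:
        self._by_role: Dict[str, TextModel] = {}

    def register(self, role: str, model: TextModel) -> None:
        self._by_role[role] = model  # re-registering a role swaps the backend

    def complete(self, role: str, prompt: str) -> str:
        return self._by_role[role].complete(prompt)


registry = ModelRegistry()
registry.register("summarizer", EchoModel("model-a"))
registry.register("summarizer", EchoModel("model-b"))  # swap, callers unchanged
print(registry.complete("summarizer", "hi"))
```

When the model in use becomes obsolete, the change is one `register` call rather than a search through every call site.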

Felipe Blanes, Senior Technical Program Manager at Amazon AGI Lab, came in from Boston to talk about reliability. He leads the customer enablement program for Amazon Nova Act, the agent SDK that drives actions in browsers. His central observation: agents have crossed the intelligence threshold. Reliability (same input, same output, hundreds of times in a row) is now the gating constraint.

- Most agents in the wild run at ~55% reliability. Enterprises need 90% to 99% before they trust production. Two recent changes closed the gap: models got more reliable, and developer tools matured from “months to build an agent” to “hours.”

- The 90/10 rule: 90% of business problems can be solved with deterministic code and traditional APIs. Reserve agentic AI for the 10% that genuinely needs it. Agents are too expensive to spray everywhere.

- Real production cases: Hertz cut release time 5x with AI-driven QA, Sola runs 100,000+ workflows per month for process automation, 1Password uses agents to keep its login database current across thousands of sites.

- The four-layer agent stack: your application, agentic services (the harness), foundation models, chips. Every layer improving over time compounds the reliability gains.

- Future arc: isolated tasks today, then trusted workflows, then agent-to-agent collaboration, then the “coworker” agent that adapts and knows when to ask for help.
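
The “same input, same output, hundreds of times in a row” definition suggests a simple measurement. This is a sketch under my own assumptions, not Nova Act’s API: `measure_reliability` and `meets_bar` are hypothetical helpers, and agreement with the most common output is only a proxy, since the most common output can still be wrong.

```python
from collections import Counter
from typing import Callable, Hashable


def measure_reliability(
    agent: Callable[[str], Hashable], task: str, runs: int = 100
) -> float:
    """Run the same task repeatedly and return the share of runs that
    produced the most common output (1.0 means fully consistent)."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    outcomes = Counter(agent(task) for _ in range(runs))
    most_common_count = outcomes.most_common(1)[0][1]
    return most_common_count / runs


def meets_bar(reliability: float, threshold: float = 0.90) -> bool:
    """Check a measured reliability against a production threshold."""
    return reliability >= threshold
```

In practice you would compare each run against a known-good result instead of the most common one, and run the check per workflow, because a long workflow is only as reliable as its weakest step.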

Cristina Cestari, CIO of Volkswagen for South America, presented Otto, the first generative AI assistant developed by Volkswagen in Brazil, currently shipping inside the Volkswagen Tera SUV. Otto runs on Google Vertex AI with Gemini at the core, plus Picovoice for wake-word activation and ElevenLabs for text-to-speech, and was built as a partnership between VW Tech, the Volkswagen Americas design team, and Accenture.

- Therapy and companionship were the number one consumer Gen AI use case in Harvard Business Review’s 2025 ranking. Otto is built on that bet. The car becomes a companion that travels with the customer’s life.

- Strict guardrails. Otto is a Volkswagen assistant. It will not speak ill of competitors. Responsible AI is built in as a design constraint, treated with the same weight as performance targets.

- Connected APIs make the difference. Otto reads vehicle telemetry and can tell you: “your range is 200 km, the trip is 700 km, plan a fuel stop.” Calendar, weather, maps, and Spotify all wired in.

- Cristina’s broader point for traditional companies: the hardware lifecycle is now shorter than the AI lifecycle. Devices change. The trained models persist. Plan for that inversion.

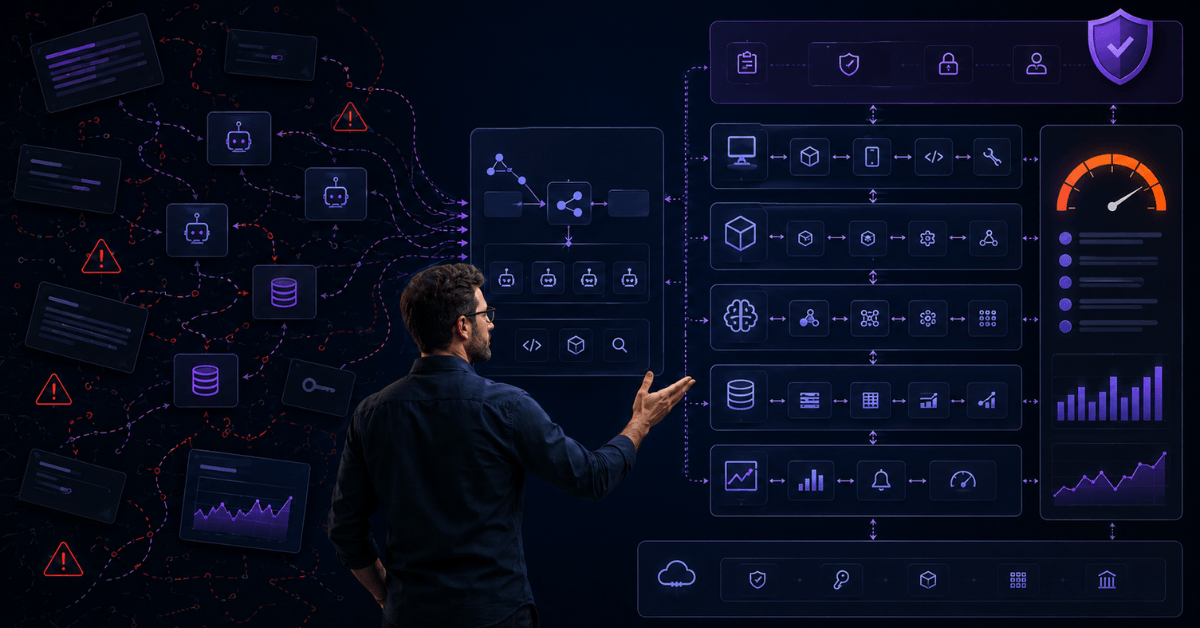

Carlos Octávio Queiroz, VP of Technology at LG Lugar de Gente (previously Director of Architecture, AI, and Partnerships at Globo), presented a practitioner’s framework for actually rolling out an AI program inside a company. His thesis: the hard part is organizational architecture, governance, and culture. Technology comes after that.

- Seven pillars he debates and approves with leadership: ambitions, use cases, technology, orchestration, culture, principles, and risks.

- Five prioritization criteria he uses: new revenue, increased revenue, customer experience, knowledge gain, and cost reduction.

- Hub and spoke governance. The center of expertise enables the business areas to ship AI. Centralize the foundation and the patterns. Decentralize the building.

- Without a real data foundation, AI initiatives will be short flights. Context (the company’s actual processes, decisions, and history) is where differentiation lives. Generic prompt engineering isn’t enough anymore.

- FinOps for AI is non-negotiable. Uber exhausted its 2026 AI budget in four months, mostly on Claude Code (in a company that spent US$ 3.4 billion on R&D in 2025). Be the company that knows where the tokens went.
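
“Knowing where the tokens went” can start as something very small. Below is a sketch of my own, not a framework from the talk: the per-million-token prices, the tier names, and the `TokenLedger` class are all hypothetical.

```python
from collections import defaultdict
from typing import Dict

# Hypothetical prices in US$ per million tokens; real prices vary by vendor.
PRICE_PER_MILLION = {"small": 1.0, "large": 15.0}


class TokenLedger:
    """Accumulates token spend per team so budget burn is visible early."""

    def __init__(self, monthly_budget_usd: float) -> None:
        self.monthly_budget_usd = monthly_budget_usd
        self._spend: Dict[str, float] = defaultdict(float)

    def record(self, team: str, model_tier: str, tokens: int) -> None:
        """Add the cost of one call to the team's running total."""
        cost = tokens / 1_000_000 * PRICE_PER_MILLION[model_tier]
        self._spend[team] += cost

    def spend_by_team(self) -> Dict[str, float]:
        return dict(self._spend)

    def budget_used(self) -> float:
        """Fraction of the monthly budget consumed so far."""
        return sum(self._spend.values()) / self.monthly_budget_usd


ledger = TokenLedger(monthly_budget_usd=100.0)
ledger.record("platform", "large", 2_000_000)
ledger.record("support", "small", 5_000_000)
print(ledger.spend_by_team(), ledger.budget_used())
```

Even a ledger this simple answers the two questions that matter when a budget runs out early: which team spent it, and on which tier of model.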

Day 2 closes the festival. Deeper write-ups on some specific topics are coming in the next few days.