A few years back, the question “compile to native or stay on the JVM?” had a short answer: it depended on whether you needed sub-100ms cold start as part of the SLA. If you did, you went GraalVM Native Image and paid the price in reflection metadata, dynamic class loading limitations, and tooling that was still maturing. If you did not, you stayed on the JVM and stopped thinking about it.

That question is now the wrong one. Anyone still deciding between Native Image and the JVM purely on the basis of “which one wins on benchmark X” has missed what changed.

What changed: Project Leyden actually shipped

Until JDK 24 arrived in March 2025, Project Leyden was mostly conference talks and design notes. Since then it has delivered real JEPs into production releases. The official Project Leyden page lists four:

- JEP 483: Ahead-of-Time Class Loading & Linking in JDK 24

- JEP 514: Ahead-of-Time Command-Line Ergonomics in JDK 25

- JEP 515: Ahead-of-Time Method Profiling in JDK 25

- JEP 516: Ahead-of-Time Object Caching with Any GC in JDK 26

The baseline benefit is the one JEP 483 delivered in JDK 24, which JEP 516 cites as background:

“the Spring PetClinic demo application starts 41% more quickly in production because the cache enables some 21,000 classes to appear already loaded and linked when the application starts.”

That is a 41% faster cold start on Spring PetClinic with the full JVM still in place at runtime: no rewriting, no reachability metadata, no GC switch.

JEP 516, which landed in JDK 26 (GA on March 17, 2026), closed the last operational gap for Leyden in production: the AOT cache now works with any garbage collector, including ZGC. Before that, teams that needed ZGC's low tail latency had to give up the AOT cache. That trade-off is gone.
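For readers who have not tried it yet, here is a rough sketch of the JEP 483 workflow as it works on JDK 24: a recording run, a cache assembly step, then a production start. The flags come from the JEP. The names `app.jar`, `com.example.App`, `app.aotconf` and `app.aot` are placeholders, and the `run` helper with its `DRY_RUN` switch is only there so the sketch prints the commands instead of requiring a JDK.

```shell
#!/usr/bin/env bash
# Sketch of the JEP 483 workflow (JDK 24+). All file and class names are placeholders.
APP_JAR="${APP_JAR:-app.jar}"
MAIN_CLASS="${MAIN_CLASS:-com.example.App}"

# Dry-run by default: print the command. Set DRY_RUN=0 to actually execute it.
run() { if [ "${DRY_RUN:-1}" = "1" ]; then echo "$*"; else "$@"; fi; }

# 1. Training run: record which classes the application loads and links.
aot_record() {
  run java -XX:AOTMode=record -XX:AOTConfiguration=app.aotconf -cp "$APP_JAR" "$MAIN_CLASS"
}

# 2. Assemble the cache from the recorded configuration (the app itself does not run).
aot_create() {
  run java -XX:AOTMode=create -XX:AOTConfiguration=app.aotconf -XX:AOTCache=app.aot -cp "$APP_JAR"
}

# 3. Production start with the cache. Extra JVM flags are passed through,
#    for example -XX:+UseZGC on JDK 26+ (JEP 516).
aot_start() {
  run java -XX:AOTCache=app.aot "$@" -cp "$APP_JAR" "$MAIN_CLASS"
}

aot_record
aot_create
aot_start -XX:+UseZGC
```

On JDK 25 and later, JEP 514 folds steps 1 and 2 into a single `-XX:AOTCacheOutput` run, which is the form used later in this post.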

Where Native Image stands

Native Image is still doing what it has always done well: true AOT compilation, standalone binary, sub-100ms boot, memory footprint substantially smaller than the JVM. It is still the right call for serverless functions, scale-to-zero workloads, tiny container images, and edge deployments.

There is one detail enterprise teams keep missing, though: PGO (Profile-Guided Optimization), the mechanism GraalVM uses to close part of the runtime throughput gap between AOT and JVM, is not available in GraalVM Community Edition. The official documentation is direct about it:

“Note: PGO is not available in GraalVM Community Edition.”

PGO ships in Oracle GraalVM, which since June 2023 has been distributed under the GFTC license, permitting free use including commercial production. That changes the math: if your strategy depends on PGO to close the throughput gap, you are committing to Oracle GraalVM. If your policy is community builds only (OpenJDK plus GraalVM Community Edition), PGO is not part of your toolbox.
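One way to keep a pipeline honest about this is a small guard that refuses to attempt PGO on a Community Edition toolchain. The sketch below is a guess at how that could look: it assumes the `native-image --version` banner contains the string "Oracle GraalVM" on Oracle builds, which is an observation about current releases and not a documented contract. The function name `supports_pgo` is made up for illustration.

```shell
#!/usr/bin/env bash
# supports_pgo VERSION_TEXT
# Succeeds only if the given `native-image --version` output looks like an
# Oracle GraalVM build. Matching on the "Oracle GraalVM" substring is an
# assumption about the banner format, not a documented API.
supports_pgo() {
  case "$1" in
    *"Oracle GraalVM"*) return 0 ;;
    *) return 1 ;;
  esac
}

# Usage in a build script (needs native-image on PATH):
#   if supports_pgo "$(native-image --version 2>&1)"; then
#     native-image --pgo-instrument -jar app.jar -o app-instr
#   else
#     echo "Community build detected: skipping PGO" >&2
#   fi
```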

The rest of the Native Image discipline still applies. You still need reachability metadata for reflection. You can still use the GraalVM Native Image agent to capture that configuration from integration tests: in Quarkus projects, run the integration tests under the agent with -Dquarkus.test.integration-test-profile=test-with-native-agent, then feed the captured configuration into the native build with -Dquarkus.native.agent-configuration-apply. And you still owe the engineering effort of knowing what your application actually does at runtime, so the AOT compiler can do its job.
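Put together, the two Quarkus properties above might be wired into a build roughly like this. The two `-Dquarkus...` properties are the ones named in the text. The Maven wrapper, the `verify` goal, `-DskipITs=false` and `-Dnative` are assumptions about a typical Quarkus Maven project, so adjust them to your build. The sketch prints the commands by default instead of running them.

```shell
#!/usr/bin/env bash
# Dry-run by default: prints the Maven invocations. Set DRY_RUN=0 to execute.
run() { if [ "${DRY_RUN:-1}" = "1" ]; then echo "$*"; else "$@"; fi; }

# 1. Run the integration tests on the JVM with the Native Image agent attached,
#    so it can record reflection and resource usage.
capture_metadata() {
  run ./mvnw verify -DskipITs=false \
    -Dquarkus.test.integration-test-profile=test-with-native-agent
}

# 2. Build the native executable and apply the configuration the agent captured.
build_native() {
  run ./mvnw verify -Dnative -Dquarkus.native.agent-configuration-apply
}

capture_metadata
build_native
```

The captured configuration is only as complete as the integration tests that produced it, which is the same representativeness problem the training run has.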

How to decide between them (without marketing benchmarks)

The criterion is no longer “which one wins on microbenchmark X.” It is a platform call:

Go with Native Image when:

- The workload is serverless or scale-to-zero and a sub-100ms cold start is part of the SLA, not a nice-to-have.

- Container footprint matters more than long-running throughput (edge, FaaS, sidecar).

- The application has contained reflection and stable reachability metadata (LangChain4j, Quarkus extensions, frameworks with first-class Native Image support).

- The team can run Oracle GraalVM in production and has the operational capacity to implement PGO with a representative workload.

Stay on the JVM with Leyden when:

- The workload is long-running and what matters is peak throughput, not cold start.

- The application depends on libraries that do heavy dynamic class loading, runtime Java agents, or hot reload during development.

- You want measurable cold start improvement (think the 41% on PetClinic) without rewriting anything and without changing GC.

- The team is on community OpenJDK and prefers to stay there.

These are not mutually exclusive. In the enterprise Java systems I work with, mature platforms run the main service on the JVM with Leyden’s AOT cache, and push specific workloads (functions, ephemeral jobs, edge handlers) to Native Image. The decision is per workload, not for the whole stack.
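To make "per workload" concrete, the two lists can be boiled down to a deliberately crude helper. This is a simplification for illustration, not a rule engine: it looks at only two of the criteria, it treats heavy dynamic class loading as a blocker for Native Image, and the function and workload names are hypothetical.

```shell
#!/usr/bin/env bash
# pick_runtime COLD_START_SLA DYNAMIC_LOADING
#   COLD_START_SLA   yes|no  a sub-100ms cold start is part of the SLA
#   DYNAMIC_LOADING  yes|no  heavy dynamic class loading or runtime agents
pick_runtime() {
  local cold_start_sla="$1" dynamic_loading="$2"
  if [ "$dynamic_loading" = "yes" ]; then
    echo "jvm-leyden"      # treated as a blocker for Native Image in this sketch
  elif [ "$cold_start_sla" = "yes" ]; then
    echo "native-image"
  else
    echo "jvm-leyden"      # default: keep the JIT, add the AOT cache
  fi
}

# Applied per workload, not per stack (workload names are hypothetical).
printf '%-14s %s\n' "catalog-api"  "$(pick_runtime no yes)"
printf '%-14s %s\n' "thumbnail-fn" "$(pick_runtime yes no)"
```

A workload that answers "yes" to both questions is the interesting case: the helper keeps it on the JVM, but in practice that is a signal to look at whether the dynamic parts can be isolated.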

The detail nobody talks about: the training run

Both Native Image (with PGO) and Leyden (with the AOT cache) depend on a training run. That training run has to be representative of the production workload, otherwise what you are optimizing is the wrong path.

The official Native Image PGO documentation is explicit:

“the goal is to gather profiles on workload that match the production workloads as much as possible. The gold standard for this is to run the exact same workloads you expect to run in production on the instrumented binary.”

For Leyden, the training run executes on a regular JVM with the right flags and produces the AOT cache populated with pre-loaded classes and profiles. In both cases, if the training run is a thin synthetic test, the production gain will be equally thin. In some cases, it can be worse than not doing AOT at all.

For teams moving from stage 1 (local tests) to stage 2 (CI/CD-driven optimization), that means investing in repeatable production-like workloads: traffic shadowing, anonymized payload replay, load tests that genuinely exercise the hot paths. It is platform work, not a feature ticket.
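A cheap first step is to measure what the production mix actually is before scripting anything. The helper below is one possible way to do that with nothing but awk and sort. It assumes an already anonymized log with one request per line in the form `METHOD PATH`. That format and the name `traffic_mix` are assumptions for this sketch, so adapt the field positions to whatever your access logs look like.

```shell
#!/usr/bin/env bash
# traffic_mix [FILE...]
# Print each endpoint's share of requests, largest first.
# Assumed input: one request per line, "METHOD PATH" (reads stdin if no file is given).
traffic_mix() {
  awk '
    {
      path = $2
      sub(/\?.*/, "", path)             # drop the query string
      gsub(/\/[0-9]+/, "/{id}", path)   # collapse numeric ids into one bucket
      count[$1 " " path]++
      total++
    }
    END {
      for (k in count) printf "%d%% %s\n", (count[k] * 100) / total, k
    }
  ' "$@" | sort -rn
}

# Usage: traffic_mix access.log
```

The percentages it prints are the numbers the training workload should reproduce, and rerunning it periodically is a simple way to notice when the traffic shape has drifted.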

Concretely: take a Quarkus catalog API where the traffic splits 70/25/5 (search, lookup by id, checkout). The training script reproduces that ratio:

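    #!/usr/bin/env bash
    # training-workload.sh: replays the 70/25/5 mix (search, lookup by id, checkout) for 180 seconds.
    BASE="${1:-http://localhost:8080}"
    end=$((SECONDS + 180))
    while [ "$SECONDS" -lt "$end" ]; do
      roll=$((RANDOM % 100))
      if [ "$roll" -lt 70 ]; then      # 70%: search
        curl -sf "$BASE/products/search?category=electronics" >/dev/null &
      elif [ "$roll" -lt 95 ]; then    # 25%: lookup by id
        curl -sf "$BASE/products/$((RANDOM % 1000))" >/dev/null &
      else                             # 5%: checkout
        curl -sf -X POST "$BASE/cart/checkout" \
          -H "Content-Type: application/json" \
          -d '{"items":[{"id":42,"qty":1}]}' >/dev/null
      fi
      sleep 0.05
    done
    wait   # let in-flight background requests finish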

Feed it to Leyden (JDK 25+, JEP 514 ergonomics):

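    # training: start the app, drive the workload, then stop it; the cache is written as the JVM exits
    java -XX:AOTCacheOutput=app.aot -jar app.jar & PID=$!
    sleep 10 && ./training-workload.sh
    kill -TERM "$PID" && wait "$PID"

    # production
    java -XX:AOTCache=app.aot -jar app.jar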

Or to Native Image PGO (Oracle GraalVM):

    # build an instrumented binary, drive the workload against it, then rebuild with the profile
    native-image --pgo-instrument -jar app.jar -o app-instr
    ./app-instr & PID=$!
    sleep 10 && ./training-workload.sh
    kill -TERM "$PID" && wait "$PID"   # writes default.iprof
    native-image --pgo=default.iprof -jar app.jar -o app

Same script, two consumers. That script (not the flag) is the platform asset. It lives in the repo, gets reviewed like code, and gets updated when the traffic shape changes.
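One fragile spot in both invocations is the fixed ten-second sleep before the workload starts: on a slow CI runner the script may begin before the application is listening, and the first requests are lost from the training data. A small polling helper is one way around that. This is only a sketch. The name `wait_until` is made up, and the `/q/health/ready` path in the usage comment assumes a Quarkus application with a health extension installed.

```shell
#!/usr/bin/env bash
# wait_until TIMEOUT_SECONDS CMD [ARGS...]
# Re-run CMD about once per second until it succeeds.
# Returns 0 on success, 1 if the timeout expires first.
wait_until() {
  local timeout="$1"; shift
  local deadline=$((SECONDS + timeout))
  until "$@" >/dev/null 2>&1; do
    [ "$SECONDS" -ge "$deadline" ] && return 1
    sleep 1
  done
  return 0
}

# Usage in place of `sleep 10` (the health path is an assumption):
#   wait_until 60 curl -sf "http://localhost:8080/q/health/ready" && ./training-workload.sh
```

Because the probe is just a command, the same helper works for the JVM training run and for the instrumented native binary.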

Practical layout for an enterprise Java project

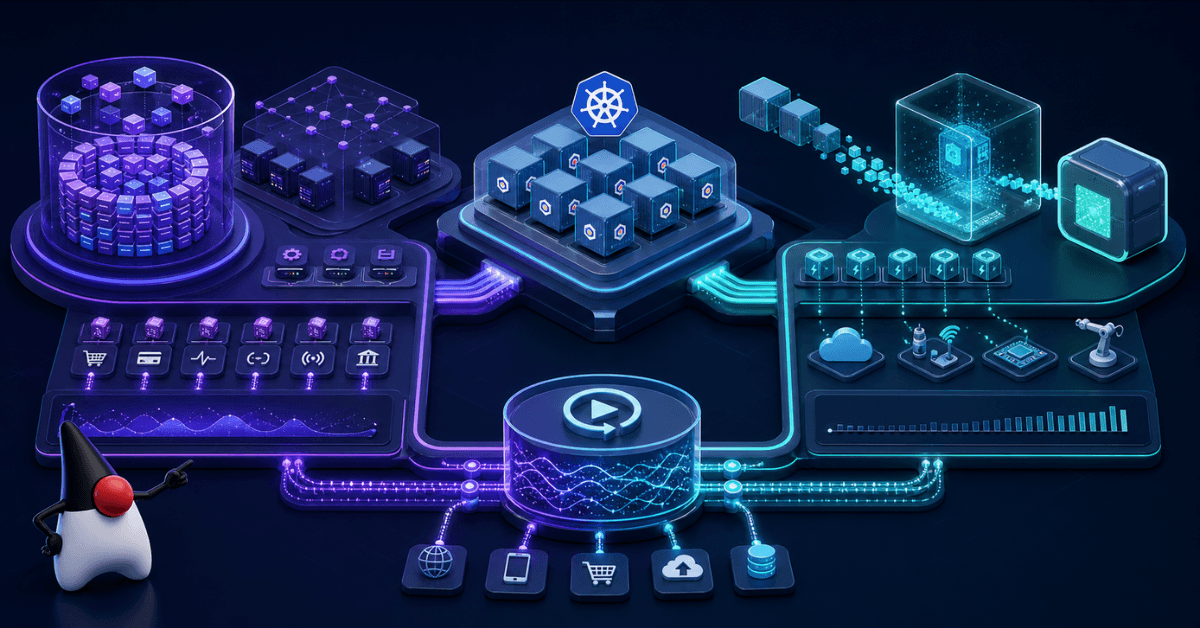

A concrete pattern that holds up well in production: a Quarkus application running on Kubernetes, mixed workload of synchronous REST plus background jobs plus a few endpoints that turn into functions under load.

A reasonable layout:

- Application core: Quarkus on the JVM (OpenJDK 26+) with Leyden’s AOT cache via JEP 483 plus JEP 515. Cold start gain without losing JIT at runtime.

- Cold endpoints and ephemeral jobs: same code, native build through Quarkus and GraalVM Native Image, deployed separately as functions. PGO is optional and only kicks in if the team is on Oracle GraalVM.

- GC: ZGC if tail latency is the binding constraint (and now compatible with the AOT cache via JEP 516); G1 if average throughput is what matters.

- Training run: part of the CI/CD pipeline, with a production-replica workload versioned alongside the code.
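As a rough illustration of the application-core and GC bullets, the start command can be derived from the GC choice in one place. The sketch assumes the cache file is called `app.aot` and that it was trained with the same collector flags it will run with. It also adds `-XX:AOTMode=on`, which makes the JVM exit with an error if the cache cannot be used. That is a fail-fast choice that may suit a CI smoke start better than production, where the default silent fallback could be preferable. The function name `jvm_flags` is hypothetical.

```shell
#!/usr/bin/env bash
# jvm_flags GC [CACHE]
# Print the JVM flags for the application core, given the GC choice.
# -XX:AOTMode=on makes the JVM refuse to start if the cache is unusable,
# instead of silently falling back to a normal start.
jvm_flags() {
  local gc="$1" cache="${2:-app.aot}"
  case "$gc" in
    zgc) echo "-XX:+UseZGC -XX:AOTCache=$cache -XX:AOTMode=on" ;;   # tail latency bound
    g1)  echo "-XX:+UseG1GC -XX:AOTCache=$cache -XX:AOTMode=on" ;;  # throughput bound
    *)   echo "unknown gc: $gc" >&2; return 1 ;;
  esac
}

# Example: a latency-bound service.
echo "java $(jvm_flags zgc) -jar app.jar"
```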

That is what cloud-native looks like without the noise: each workload on the right tool, no need to commit to a single path for the entire system.

Conclusion

The choice between GraalVM Native Image and Project Leyden is no longer ideological. Both paths matured, both closed their main gaps, and each one has a clear best-fit scenario. Anyone still framing the decision as “which one is the future of Java” has missed the shift: both shipped, and each one fits a different workload.

The criterion is architectural, not driven by hype. Know your workload, pick the right tool, and invest in your training run with the same care you put into the code.

If you are in this decision right now, or you already shipped one of these into production, what was the workload that pushed you in one direction or the other? Drop a comment with what you saw on your side. And if this post helped you sharpen the trade-off, share it with someone planning a platform upgrade this year.