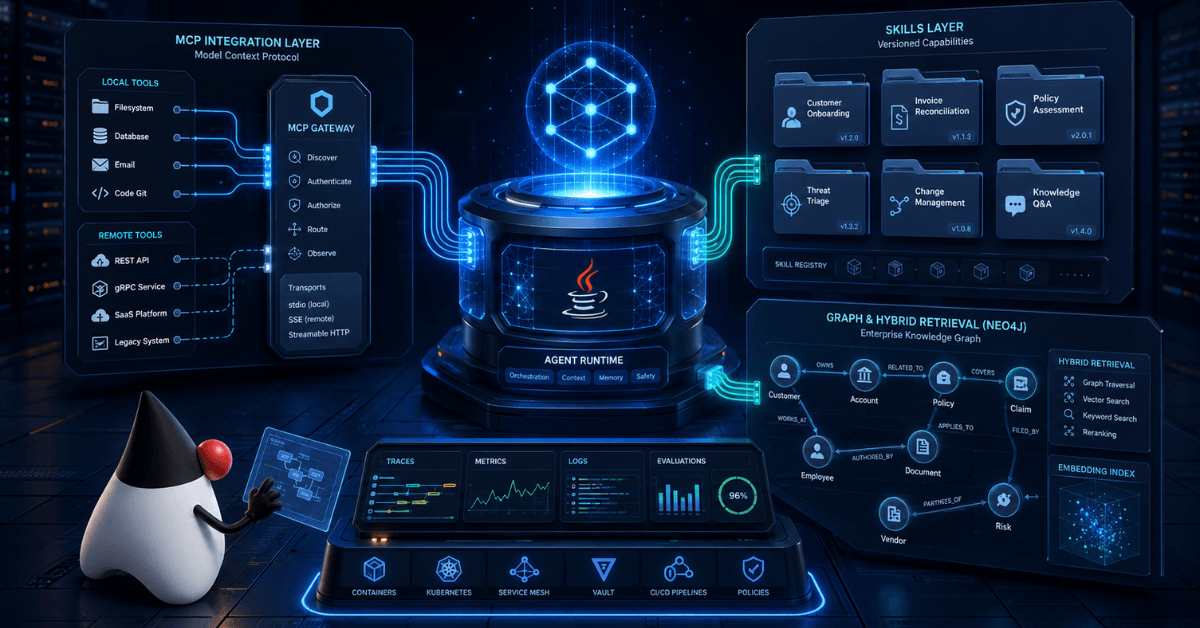

Three things landed in the enterprise plumbing in the past few weeks. At Knowledge 2026 (May 5), ServiceNow announced Action Fabric, an open MCP (Model Context Protocol) server, building on top of the Now Assist MCP Server Console that has been GA since the Zurich release earlier this year. Jama published its own MCP Server. Google promoted MCP Toolbox for Databases to v1.0 in April, consolidating its Java SDK (released in March) alongside the existing Python, Go, JavaScript, and TypeScript clients. Quietly, in parallel, the Quarkiverse team kept shipping the 1.9.x line of Quarkus LangChain4j with three extensions that change what a Java architect can put on top of that new plumbing: quarkus-langchain4j-mcp, quarkus-langchain4j-skills, and quarkus-langchain4j-neo4j.

The combination of these two waves (vendors moving MCP to GA infrastructure, and Quarkiverse closing the Java side with first-class extensions) is the clearest signal that enterprise AI agents on Java have stopped being an academic exercise and become an architecture decision.

The market signal: MCP is now plumbing, not a preview

If you have been tracking the Java AI ecosystem over the past two years, you saw MCP show up as just another acronym. The picture today is different. ServiceNow describes its own MCP Server as a native piece of Now Assist, governed by AI Control Tower, with managed OAuth, audit trails, observability, and consumption metering. Full OAuth 2.1 support and the broader MCP security model (PKCE, least-privilege, human-in-the-loop consent) are on ServiceNow’s roadmap as the implementation matures. Jama published its MCP Server as the official integration surface for external agents. Google shipped the MCP Toolbox for Databases in Java, no longer a Python-only project.

When vendors of that profile start treating MCP as GA rather than as a preview, the architect’s job changes. It is no longer “let me try this in a PoC“. It becomes: “how do I integrate the vendor’s MCP server with the agent that runs inside my cluster, with the observability, identity model, and build pipeline I already have in production?”

That gap (between the MCP server a vendor publishes and the agent that has to live inside your runtime, under your rules) is exactly what the Quarkus LangChain4j 1.9.x line was built to close.

What the three extensions actually deliver

The first thing that matters is to understand what each extension does, and why these are not syntactic sugar over the LangChain4j core library.

quarkus-langchain4j-mcp is a first-class MCP client, configurable through application.properties, with support for STDIO, HTTP, and Streamable HTTP transports. Instead of constructing an McpClient by hand and managing its lifecycle yourself, you declare each MCP server as configuration:

quarkus.langchain4j.openai.api-key=${OPENAI_API_KEY}

# Local MCP server over STDIO

quarkus.langchain4j.mcp.filesystem.transport-type=stdio

quarkus.langchain4j.mcp.filesystem.command=npm,exec,@modelcontextprotocol/server-filesystem,/srv/docs

# Remote MCP server over Streamable HTTP

quarkus.langchain4j.mcp.servicenow.transport-type=streamable-http

quarkus.langchain4j.mcp.servicenow.url=https://mcp.servicenow.example.com/mcp

To wire this into an AiService, the integration is declarative. The extension exposes the @McpToolBox annotation, which lets the agent dynamically discover and call the tools published by the named MCP servers:

import io.quarkiverse.langchain4j.RegisterAiService;

import io.quarkiverse.langchain4j.mcp.runtime.McpToolBox;

import dev.langchain4j.service.SystemMessage;

import dev.langchain4j.service.UserMessage;

@RegisterAiService

public interface IncidentAssistant {

@SystemMessage("""

You are an operations assistant that helps SREs

investigate incidents in an enterprise environment.

""")

@McpToolBox({"servicenow", "filesystem"})

String investigate(@UserMessage String question);

}

That is less code than a typical MCP “hello world”, and it ships with Dev Services, hot reload, integration with quarkus.tls.*, and binding for native compilation. That last sentence is the part that does not show up when you compare snippets side by side.

quarkus-langchain4j-skills is the second piece that changes the picture. It loads skills defined as subdirectories, each containing a SKILL.md file with YAML front matter (the open Agent Skills format originally introduced by Anthropic). The extension exposes the loaded skills through two complementary CDI beans: a SkillsToolProvider that publishes the skills as tools to any AI service using a ToolProvider, and a SkillsSystemMessageProvider that injects the skills’ descriptions into the agent’s system message. You annotate the AI service with the right system message provider and you are done:

import io.quarkiverse.langchain4j.RegisterAiService;

import io.quarkiverse.langchain4j.skills.SkillsSystemMessageProvider;

@RegisterAiService(systemMessageProviderSupplier = SkillsSystemMessageProvider.class)

public interface IncidentAnalyst {

String analyze(String context);

}

quarkus.langchain4j.skills.directories=skills,classpath:other-skills

The first release supports only the tool mode of skills, but that is already enough to move operational playbooks out of Java code and into a versioned, reviewable artifact that lives next to the agent. The skill becomes a configuration artifact, reviewed in pull requests, deployed alongside the binary.

The third piece, quarkus-langchain4j-neo4j, is the embedding store extension built on top of the official quarkus-neo4j driver. For teams that already use Neo4j as a domain graph, this is the door into hybrid retrieval (vector plus relationship) without standing up a parallel stack just for RAG. The extension inherits Dev Services from quarkus-neo4j, so your local environment boots itself.

What changes in production

In the enterprise Java systems I have worked with over the years, the gap between “we have AI in the codebase” and “AI runs in production, inside my SLA, under my control” is almost always the rest of the platform. Native compilation, observability, identity, build pipeline, FinOps. That is where the Quarkus + LangChain4j stack stops being a stylistic preference and starts being a concrete recommendation.

When the agent runs in Quarkus, metrics and traces plug into Micrometer and OpenTelemetry as soon as the quarkus-micrometer and quarkus-opentelemetry extensions are added. That is the same path the rest of your REST and messaging code already uses. The MCP tool invocation lands in the same trace as the HTTP request that triggered the agent. This is verifiable from the extension code: it is a standard Quarkus extension, registered through CDI, and it respects whatever interceptors are already installed.

Native compilation is supported across the stack. That changes the cost calculation: an agent in a serverless container or a cold-start critical path can run as a native image, with a small binary and fast startup, without losing the MCP client, the RAG layer, or the CDI service. There is still work to do around reachability metadata for libraries that lean heavily on reflection, but the Quarkus LangChain4j stack does not push you into that swamp by default.

And the argument that lands hardest in procurement conversations: Java enterprise is not the environment where “we need a Python sidecar for AI“. The Python sidecar is elegant for prototyping, and nothing stops you from running Python agents in parallel. What is hard to defend today is keeping the enterprise side of the agent (workflow, identity, observability, deploy) in a runtime that is not the rest of the house.

My take on the honest trade-off

I want to be direct about real trade-offs, because hand-waving about “Java is better” does not help anyone.

These three extensions are still labeled preview in the Quarkus extension catalog, which is the honest caveat: APIs can shift before they hit GA. The integration story is solid; the version contract is still moving.

The Python ecosystem still has more third-party integrations ready off the shelf. Frameworks like LangGraph and CrewAI have examples for almost any scenario, from a DevDay demo to a sample repository.

In almost every other axis that matters to a company running Java in production at scale (compliance, identity, observability, reuse of build pipelines, runtime FinOps, integration with corporate IDP and IAM), the Quarkus LangChain4j path saves months of platform work. And once MCP became the integration boundary with enterprise vendors, the “Python has more examples” argument stopped being decisive. The MCP server that ServiceNow or Jama publishes is the same server for any client that conforms to the spec. The Java client consumes it with the same fidelity.

My practical recommendation: if your team already runs Java in production and is looking to put an agent in the product today, start with the Quarkus LangChain4j stack. Keep Python for experimentation or for embedding ETL. Do not duplicate platform just to follow a trend.

Wrapping up

The picture is in focus: MCP is the default integration protocol between an agent and an enterprise vendor, and the Quarkus + LangChain4j stack closed the Java side with three extensions that take platform work off the architect’s desk. A declarative MCP client driven by application.properties, skills loaded as configuration artifacts, and a Neo4j embedding store with Dev Services. All on top of the runtime your company already operates.

If you are a Staff Engineer or an architect still selling agentic AI on Java to your team, now is a good time to open the repository and bring up your first @McpToolBox against a local MCP server. The hard platform work is no longer the blocker. The decision is.

What is the friction holding back agentic Java on your side: the runtime, the integrations, or the procurement story? Drop a comment, share this with the architect on your team who is still treating MCP as a preview, and let me know what you would like to see covered next.